Part 2: Container orchestration using Kubernetes

In the second part of our tech basics series on Containers, Microservices & Kubernetes, we will talk about container orchestration and Kubernetes. If you are new here, do check out Part 1 of this blog series first!

Why do you need container orchestration?

Running a single application in a container might look simple and straightforward. In the real world, however, the application usually needs to talk to several other services, and it has to scale with capacity requirements. There should be a control plane capable of orchestrating this connectivity, along with the scaling, scheduling and lifecycle management of containers. That is where container orchestration comes into the picture. Container orchestration solutions like Docker Swarm, Mesos and Kubernetes offer a centralized tool for managing, scheduling and scaling containers. Of the many container orchestration platforms, Kubernetes is the most popular and widely used. All leading cloud platforms offer Kubernetes as a managed service, which helps speed up onboarding onto the platform.

Understanding Kubernetes architecture

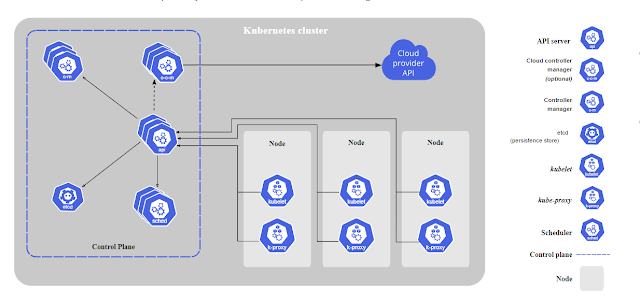

Kubernetes, also known as K8s, consists of worker machines called nodes and a control plane. A node can be a physical or virtual machine with Kubernetes installed. A Kubernetes cluster should have multiple nodes to ensure high availability and load sharing. The control plane usually consists of one or more master nodes that have Kubernetes installed and take care of container orchestration on the member nodes. The different components of the cluster are as follows:

K8s components*

kube-apiserver: This is the front end of the K8s control plane and exposes the Kubernetes API. All users and management services connect to this component, which runs on the master nodes.

etcd: This is the key-value store of K8s, which stores information about the cluster, including its masters and nodes. It is distributed and highly available: the data is replicated across the etcd members, which typically run on the master nodes, and is kept consistent so that there are no conflicts, especially when there are multiple masters.

kubelet: This is an agent that ensures that containers run as expected on each server. It runs on all worker nodes in the cluster.

Container runtime: This is the software required to run containers on a node. It can be Docker or any other container runtime, such as rkt or CRI-O.

controller: This component ensures that the cluster adheres to the desired state and manages the orchestration process. For example, when nodes or endpoints go down, the controller makes sure the affected containers are redeployed on a different node.
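To make the idea of a desired state more concrete, here is a minimal Python sketch of a reconciliation loop. It is a toy model, not Kubernetes code: `ToyCluster`, `reconcile` and the `web-N` container names are hypothetical and exist only for illustration. The loop compares the desired number of containers with what is actually running and corrects the difference.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToyCluster:
    """Hypothetical in-memory stand-in for cluster state (not a Kubernetes API)."""
    desired_replicas: int
    running: List[str] = field(default_factory=list)
    _counter: int = 0

    def start_container(self) -> str:
        # Give every new container a unique, made-up name.
        self._counter += 1
        name = f"web-{self._counter}"
        self.running.append(name)
        return name

    def stop_container(self) -> str:
        # Stop the most recently started container.
        return self.running.pop()


def reconcile(cluster: ToyCluster) -> List[str]:
    """Bring the observed state in line with the desired state.

    Returns a list describing the actions that were taken.
    """
    actions = []
    while len(cluster.running) < cluster.desired_replicas:
        actions.append("started " + cluster.start_container())
    while len(cluster.running) > cluster.desired_replicas:
        actions.append("stopped " + cluster.stop_container())
    return actions


if __name__ == "__main__":
    cluster = ToyCluster(desired_replicas=3)
    print(reconcile(cluster))        # three containers are started
    cluster.running.remove("web-2")  # simulate a container going down
    print(reconcile(cluster))        # one replacement is started
```

Calling `reconcile` again when nothing has changed returns an empty list, which is the point of the pattern: the loop only acts when the observed state drifts from the desired one.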

kube-scheduler: This component on the master nodes decides which node in the cluster a container should be deployed on. Nodes are selected based on predefined policies, constraints, affinity or anti-affinity rules, and so on.
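The selection logic can be pictured as two steps: filter out the nodes that cannot run the container, then score the remaining ones and pick the best. The Python sketch below illustrates that idea under simplifying assumptions. `Node`, `PodRequest`, `fits`, `score` and `pick_node` are hypothetical names, and the scoring rule (prefer the node with the most CPU left) is an arbitrary example policy, not the scheduler's actual one.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Node:
    name: str
    free_cpu: float        # CPU cores still available
    free_memory_mb: int    # memory still available
    labels: Dict[str, str] = field(default_factory=dict)


@dataclass
class PodRequest:
    name: str
    cpu: float
    memory_mb: int
    # Labels a node must carry to be eligible (a simple constraint).
    node_selector: Dict[str, str] = field(default_factory=dict)


def fits(node: Node, pod: PodRequest) -> bool:
    """Filter step: the node needs enough resources and matching labels."""
    has_resources = node.free_cpu >= pod.cpu and node.free_memory_mb >= pod.memory_mb
    matches_labels = all(node.labels.get(k) == v for k, v in pod.node_selector.items())
    return has_resources and matches_labels


def score(node: Node, pod: PodRequest) -> float:
    """Score step (toy policy): prefer the node with the most CPU left over."""
    return node.free_cpu - pod.cpu


def pick_node(nodes: List[Node], pod: PodRequest) -> Optional[Node]:
    """Return the best-scoring eligible node, or None if nothing fits."""
    candidates = [n for n in nodes if fits(n, pod)]
    if not candidates:
        return None
    return max(candidates, key=lambda n: score(n, pod))


if __name__ == "__main__":
    nodes = [
        Node("node-a", free_cpu=1.0, free_memory_mb=2048, labels={"disk": "ssd"}),
        Node("node-b", free_cpu=4.0, free_memory_mb=8192, labels={"disk": "hdd"}),
        Node("node-c", free_cpu=2.0, free_memory_mb=4096, labels={"disk": "ssd"}),
    ]
    pod = PodRequest("web", cpu=0.5, memory_mb=512, node_selector={"disk": "ssd"})
    chosen = pick_node(nodes, pod)
    print(chosen.name if chosen else "no node fits")
```

When no node passes the filter step, `pick_node` returns `None`, which corresponds to a container that cannot be placed until capacity or constraints change.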

kube-proxy: This component handles the networking of the cluster. It is a network proxy that maintains the rules governing the cluster's networking patterns. Traffic to and from the pods is handled according to these rules, and the service runs on worker nodes.
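One way to picture such networking rules is as a table that maps a service name to the pods behind it. The Python sketch below is a simplified illustration only: `ToyServiceProxy` and the addresses are made up, and the round-robin choice is an assumption of this example. The real kube-proxy programs rules on the node rather than forwarding traffic in-process like this, and how a backend is chosen depends on the mode it runs in.

```python
import itertools
from typing import Dict, Iterator, List


class ToyServiceProxy:
    """Hypothetical rule table mapping a service name to backend pod addresses."""

    def __init__(self) -> None:
        self._backends: Dict[str, Iterator[str]] = {}

    def set_endpoints(self, service: str, pod_addresses: List[str]) -> None:
        """Install or replace the rule for a service."""
        if not pod_addresses:
            raise ValueError("a service needs at least one backend")
        # cycle() hands out the addresses in order, forever (round robin).
        self._backends[service] = itertools.cycle(list(pod_addresses))

    def route(self, service: str) -> str:
        """Pick the backend that should receive the next request."""
        return next(self._backends[service])


if __name__ == "__main__":
    proxy = ToyServiceProxy()
    proxy.set_endpoints("web", ["10.0.0.1:8080", "10.0.0.2:8080"])
    for _ in range(3):
        print(proxy.route("web"))
```

Callers only ever refer to the service name `web`. When pods come and go, only the rule table changes, which is what lets containers be redeployed without clients having to track individual pod addresses.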

*Image courtesy: https://kubernetes.io/docs/concepts/overview/components/
