I am starting a new blog series to help those who are new to cloud technology: junior engineers, tech aspirants, students and so on. I will try to explain the basics in simple terms to help you develop a good foundation in the latest and greatest cloud technologies. If you are a seasoned cloud expert, this series will serve as a good refresher course!

We will kick off with a series on containers, microservices and Kubernetes. After covering the basics, we will move on to more advanced topics, such as how you can build and deploy containerized applications on various cloud platforms.

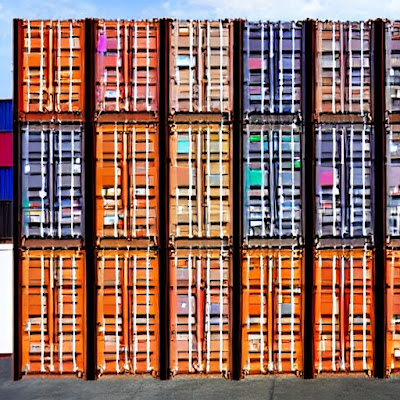

Part 1 - Containers

What are containers?

Containers bundle the application code, its dependencies and the configuration required to run the application into a single unit. There are different container technologies available: Docker, containerd, rkt and LXD. The most popular container technology is Docker. Containers are a form of operating system virtualization, where multiple applications can run on the same host but isolated from each other. Each application running in a container has its own network resources, mount points, file system etc. A Docker container image consists of a base image, customized by adding the application code, its dependent libraries and configuration files.
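To make the idea of "a base image customized with extra layers" more concrete, here is a toy model in Python. It is an illustration under simplifying assumptions, not how Docker actually stores images: each layer is a hypothetical dict of file paths to contents, and a file in a later layer replaces the same path from an earlier one. Real image layers are tar archives stacked by a union file system (such as OverlayFS), and file deletions are handled separately, which this sketch ignores. All file names and values are made up.

```python
from typing import Dict, List

# Hypothetical toy model: a layer maps file paths to file contents.
Layer = Dict[str, str]


def build_image(base: Layer, *layers: Layer) -> List[Layer]:
    """Return the image as an ordered list of layers, base image first."""
    return [base, *layers]


def resolve_filesystem(image: List[Layer]) -> Dict[str, str]:
    """Flatten the layers into the file system a container would see.

    Layers are applied in order, so a file in a later layer replaces a
    file with the same path from an earlier layer.
    """
    view: Dict[str, str] = {}
    for layer in image:
        view.update(layer)
    return view


base_image = {"/etc/os-release": "ID=ubuntu", "/usr/bin/python3": "<binary>"}
libraries = {"/app/requirements.txt": "flask==3.0.0"}
app_code = {"/app/main.py": "print('hello')", "/app/config.yaml": "env: default"}
dev_config = {"/app/config.yaml": "env: dev"}  # overrides the default config

image = build_image(base_image, libraries, app_code, dev_config)
fs = resolve_filesystem(image)
print(fs["/app/config.yaml"])  # env: dev
```

Swapping `dev_config` for a different (equally hypothetical) configuration layer would produce a different container file system while reusing the same base image and application layers.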

A container comes packaged with everything it needs to run and can be started from a container image in a matter of seconds. Container images are stored in a centralized repository called a container registry. There are several managed container registries, such as Docker Hub, Google Container Registry and Azure Container Registry. A registry can be public, accessible to everyone, or private, restricted to the people in an organization or group. Containers are hugely popular because they can run on any platform that supports container technology, i.e., Linux, Windows or macOS (on Windows and macOS, Linux containers run inside a lightweight Linux virtual machine). Because containers are lightweight, use fewer CPU and memory resources and are designed to be ephemeral, you can quickly create as many replicas of a container as your scaling requirements demand.
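When you pull an image, the client has to work out from the image name which registry to contact. The sketch below, in Python, shows how a reference such as `gcr.io/my-project/app:1.0` breaks down into registry, repository and tag. It is a simplified version of Docker's naming rules, assuming Docker Hub (`docker.io`) as the default registry and `latest` as the default tag; `parse_image_ref`, `ImageRef` and the example names are hypothetical, and the code does not validate characters.

```python
from dataclasses import dataclass
from typing import Optional

DEFAULT_REGISTRY = "docker.io"  # Docker Hub


@dataclass(frozen=True)
class ImageRef:
    registry: str
    repository: str
    tag: Optional[str]
    digest: Optional[str]


def parse_image_ref(ref: str) -> ImageRef:
    """Split an image reference into registry, repository, tag and digest."""
    name, digest = ref, None
    if "@" in name:
        name, digest = name.split("@", 1)

    # A tag is a ':' after the last '/', so the port in
    # 'localhost:5000/app' is not mistaken for a tag.
    tag = None
    if name.rfind(":") > name.rfind("/"):
        colon = name.rfind(":")
        name, tag = name[:colon], name[colon + 1:]

    # The first path component is a registry host only if it looks like one.
    first, _, rest = name.partition("/")
    if rest and ("." in first or ":" in first or first == "localhost"):
        registry, repository = first, rest
    else:
        registry, repository = DEFAULT_REGISTRY, name
        if "/" not in repository:
            # Official Docker Hub images live under the 'library' namespace.
            repository = "library/" + repository

    if tag is None and digest is None:
        tag = "latest"
    return ImageRef(registry, repository, tag, digest)


print(parse_image_ref("nginx"))
# ImageRef(registry='docker.io', repository='library/nginx', tag='latest', digest=None)
```

This is why a short name like `nginx` works out of the box (it resolves to Docker Hub), while images in a private or cloud-managed registry must include the registry host in their name.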

Why do you need containers?

When multiple applications run on the same operating system, you always need to ensure that all their libraries are compatible with each other and with the underlying operating system. This check has to be repeated whenever any of the related components is upgraded or changed. Different environments, such as development, test and production, could also use different versions of the software. Managing all of this at scale can be a challenge and can delay application development and deployment timelines.

With containers, each of these applications can run in its own isolated environment (its container). By creating a Docker configuration specific to each environment, it becomes easy to build and deploy different environments at scale and to manage their dependencies independently. Once packaged into an image, the application continues to work the same way irrespective of where it is deployed.
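The compatibility problem can be sketched as a small calculation in Python. The application names and library versions below are made up, and the model assumes, for simplicity, that a shared host can install only one version of each library. On a shared host, any library that two applications need in different versions is a conflict; with one container per application, each dependency set only has to be consistent with itself.

```python
from typing import Dict, List, Set

# Hypothetical example: each application pins the library versions it needs.
APPS: Dict[str, Dict[str, str]] = {
    "billing": {"openssl": "1.1", "libpq": "14"},
    "reports": {"openssl": "3.0", "libpq": "14"},
}


def shared_host_conflicts(apps: Dict[str, Dict[str, str]]) -> List[str]:
    """Libraries that would need more than one version on a single shared OS."""
    versions: Dict[str, Set[str]] = {}
    for deps in apps.values():
        for lib, version in deps.items():
            versions.setdefault(lib, set()).add(version)
    return sorted(lib for lib, vs in versions.items() if len(vs) > 1)


def per_container_conflicts(apps: Dict[str, Dict[str, str]]) -> Dict[str, List[str]]:
    """With one container per app, check each app's dependencies on their own."""
    return {app: shared_host_conflicts({app: deps}) for app, deps in apps.items()}


print(shared_host_conflicts(APPS))    # ['openssl']
print(per_container_conflicts(APPS))  # {'billing': [], 'reports': []}
```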

How do containers work?

Early versions of Docker used LXC (Linux Containers) in the backend, abstracting it and making it easy to deploy and manage containers; modern Docker uses its own runtime stack (containerd and runc), which builds on the same Linux kernel features. An operating system consists of the OS kernel and the software that sits on top of it. Docker offers OS-level virtualization, where the OS kernel is shared between different applications. Each container runs in its own set of namespaces, with its own view of the file system, processes and network, and carries its own libraries and other files. If the host has a Linux kernel, Docker can run containers based on different Linux distributions, for example Ubuntu, SUSE or CentOS, because they all use that same kernel. However, it cannot run containers that need a different kernel, for example Windows containers, on the same host.

How are containers different from virtual machines?

Virtual machines use hypervisor software to virtualize the underlying hardware. Each virtual machine has its own set of virtualized hardware: CPU, memory, storage and network interface cards (NICs). You can run different operating systems, for example Windows and Linux, on the same virtualization host, because VMs on a virtualization platform do not share an OS. Containers, on the other hand, do not provide isolation as strong as virtual machines: with containers, the processes, file system and networking are isolated, but the kernel is shared. However, VMs are heavier, i.e., each one needs a full operating system kernel, device drivers and everything else required to run the machine, whereas containers only need the resources required to run their applications. Because of this, containers start up much faster than virtual machines.
